What to Do with Localized Errors

Last week we found the easiest way to retrieve localized context from an error. This gives us three values to play with:

- Description

- Failure Reason

- Recovery Suggestion

But how do we use these to effectively communicate an error to our users?

To figure it out, we should start by looking at the invariant, the one thing outside our power to control: the values native frameworks give to their errors. Here’s a small list:

Apple Framework Examples

Network Connection without Entitlement:

- Description: ‘The operation couldn’t be completed. Operation not permitted’

- Reason: ‘Operation not permitted’

- Recovery: N/A

Network Timeout:

- Description: ‘The request timed out.’

- Reason: N/A

- Recovery: N/A

Network is Offline:

- Description: ‘The Internet connection appears to be offline.’

- Reason: N/A

- Recovery: N/A

File Not Found:

- Description: ‘The file “hello” couldn’t be opened because there is no such file.’

- Reason: ‘The file doesn’t exist.’

- Recovery: N/A

Bad File URL:

- Description: ‘The file couldn’t be opened because URL type http isn’t supported.’

- Reason: ‘The specified URL type isn’t supported.’

- Recovery: N/A

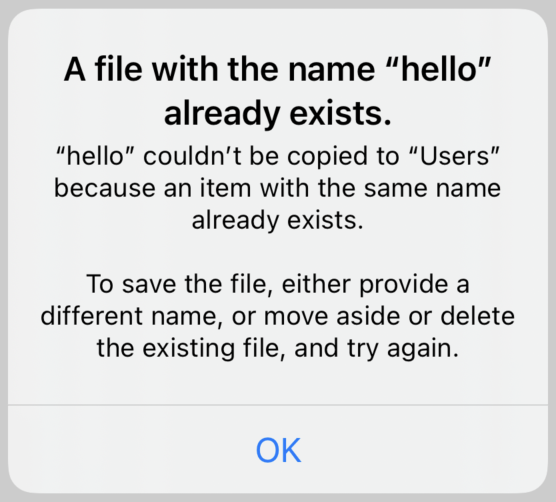

File Exists:

- Description: ‘“hello” couldn’t be copied to “Users” because an item with the same name already exists.’

- Reason: ‘A file with the name “hello” already exists.’

- Recovery: ‘To save the file, either provide a different name, or move aside or delete the existing file, and try again.’
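If we want to collect values like these ourselves, a rough sketch follows. The helper name `localizedContext(of:)` and the file path are placeholders invented for this example, and the exact strings we get back will depend on the OS version and locale:

```swift
import Foundation

// Hypothetical helper: gathers the three localized values from any Error
// by bridging it to NSError.
func localizedContext(of error: Error) -> (description: String, reason: String?, recovery: String?) {
    let bridge = error as NSError
    return (
        bridge.localizedDescription,
        bridge.localizedFailureReason,
        bridge.localizedRecoverySuggestion
    )
}

do {
    // Placeholder path, assumed not to exist, so the read throws.
    _ = try String(contentsOfFile: "/tmp/hello-does-not-exist", encoding: .utf8)
} catch {
    let context = localizedContext(of: error)
    print("Description:", context.description)
    print("Reason:", context.reason ?? "N/A")
    print("Recovery:", context.recovery ?? "N/A")
}
```

Swapping in a different failing call (a request that times out, a copy onto an existing file) is enough to fill in the other entries.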

Localized Lessons

The first thing we learn is that these values are not supported equally across Apple’s libraries. While there’s always a description present, the recovery suggestion in particular is rarely used.

But we do see a pattern. Generally, the failure reason (when present) provides a short and concise explanation of the error. Quick and to the point.

The description often restates the failure, but goes more in-depth. It might identify the particular error or some of the values involved, for example.

Finally, if the error is specific enough that a workaround can be assumed by the library author, that is written up and given in the recovery suggestion.

Hi, Alert

These usages suggest an easy mapping with the standard Alert dialog.

First we cast the incoming error as an NSError. Then we derive a title from its failure reason (or substitute a default title in case there’s no reason specified):

    let bridge = myError as NSError
    let title =
        bridge.localizedFailureReason
        ?? "An Error Occurred"

Then we take the description and the recovery suggestion (if it exists) and create a message body by joining them together with a blank line between:

    let message = [
        bridge.localizedDescription,
        bridge.localizedRecoverySuggestion,
    ]
    .compactMap { $0 }
    .joined(separator: "\n\n")

Note that we don’t need to give a default here. The localized description of an NSError will never be empty. And if there’s no recovery suggestion, we just don’t add it.

Once we have our title and message, we can pass them along to our alert controller:

    let alert = UIAlertController(
        title: title,
        message: message,
        preferredStyle: .alert
    )
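An alert with no actions gives the user no way to dismiss it, so in practice we would add at least one button before presenting. Pulling the steps together, a minimal sketch might look like this. The names `alertText(for:defaultTitle:)` and `presentAlert(for:from:)` are made up for illustration, and the UIKit half is wrapped in `canImport` so the text-building half can be tried anywhere Foundation is available:

```swift
import Foundation
#if canImport(UIKit)
import UIKit
#endif

// Hypothetical helper: derives an alert title and message from any Error.
func alertText(
    for error: Error,
    defaultTitle: String = "An Error Occurred"
) -> (title: String, message: String) {
    let bridge = error as NSError

    // Title: the failure reason, or a default when none is given.
    let title = bridge.localizedFailureReason ?? defaultTitle

    // Message: the description, plus the recovery suggestion if present,
    // separated by a blank line.
    let message = [
        bridge.localizedDescription,
        bridge.localizedRecoverySuggestion,
    ]
    .compactMap { $0 }
    .joined(separator: "\n\n")

    return (title, message)
}

#if canImport(UIKit)
// Hypothetical helper: builds the alert, adds a dismiss button, and presents it.
@MainActor
func presentAlert(for error: Error, from presenter: UIViewController) {
    let text = alertText(for: error)
    let alert = UIAlertController(
        title: text.title,
        message: text.message,
        preferredStyle: .alert
    )
    // The "OK" label is an assumption; a real app would localize it.
    alert.addAction(UIAlertAction(title: "OK", style: .default))
    presenter.present(alert, animated: true)
}
#endif
```

Keeping the text derivation separate from the UIKit call also makes the mapping easy to unit test without putting anything on screen.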

When we present it, it’ll look something like:

Breaking with Tradition

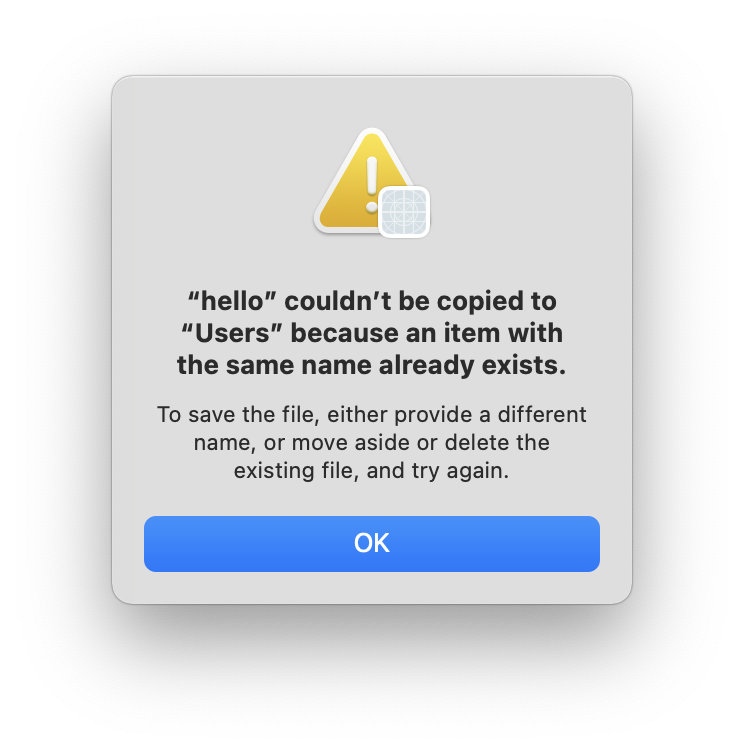

To some long-time Cocoa developers, this may look a little strange. After all, AppKit provides a presentError(_:) method on NSWindow that uses the description as the title and only renders a message if there’s a recovery suggestion given:
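For comparison, that AppKit-style layout amounts to roughly the following mapping. This is only a sketch of the behavior as described here, not AppKit's actual implementation, and `appKitStyleText(for:)` is a hypothetical name:

```swift
import Foundation

// Hypothetical helper mirroring the layout described above:
// the description becomes the title, and a message appears only
// when the error carries a recovery suggestion.
func appKitStyleText(for error: Error) -> (title: String, message: String?) {
    let bridge = error as NSError
    return (bridge.localizedDescription, bridge.localizedRecoverySuggestion)
}
```

Note that the failure reason never appears in this layout, which is the main difference from the mapping we built for our own alert.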

Why not adopt this style ourselves? We’ll talk about this more next week when we dissect how to write a good error.